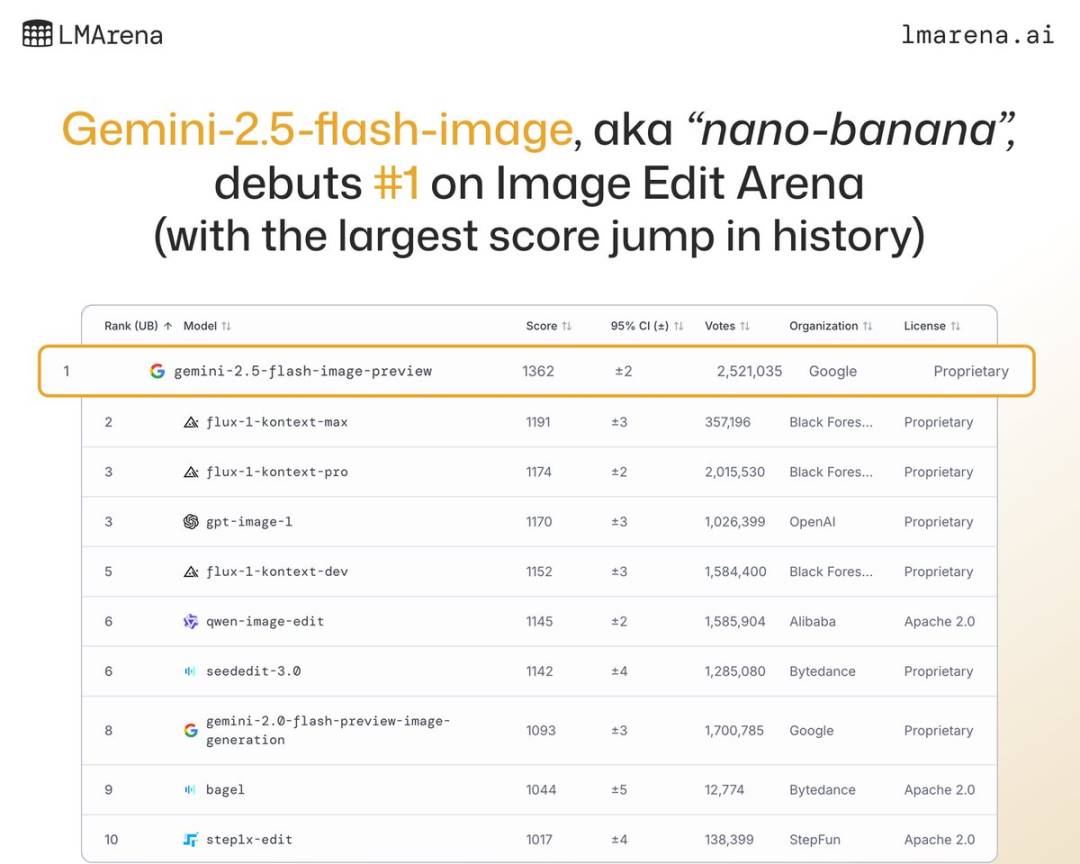

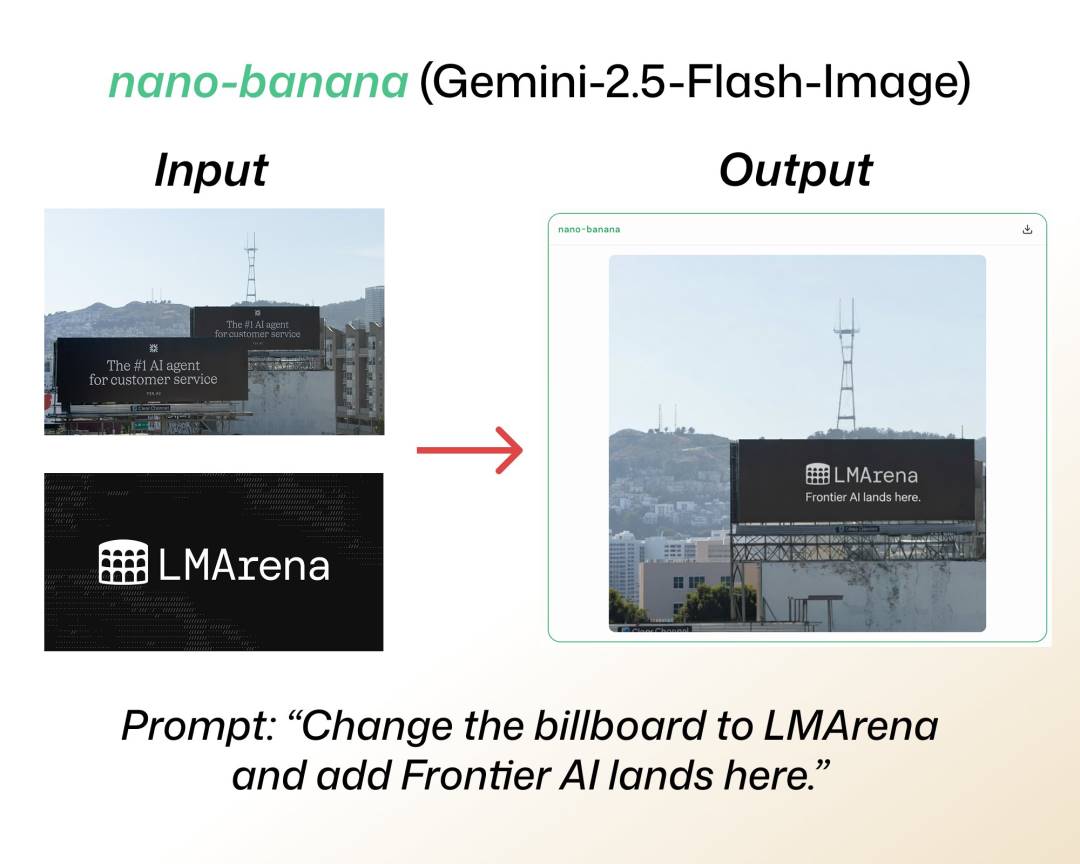

Do you remember "nano-banana," the mysterious AI image editing model that generated so much buzz a while back? It caused quite a stir on LMArena with its outstanding performance. Members of Google's Gemini team took turns teasing it on social media, and it was even rumored to be the legendary Gemini 3.0 Pro.

Now, Google has finally lifted the veil.

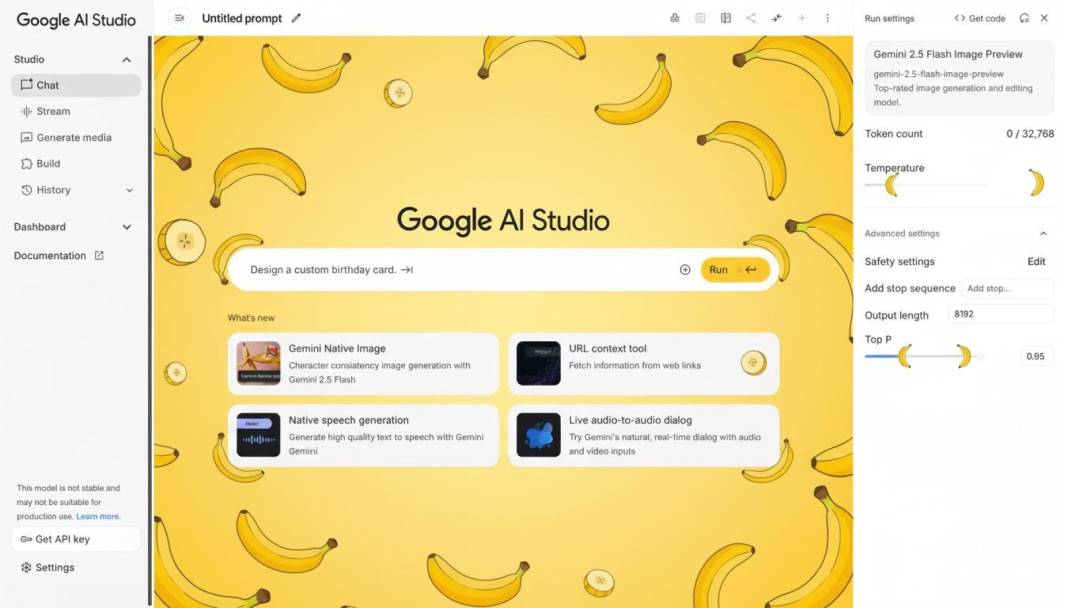

At midnight on August 27 (UTC+8), Google officially released Gemini 2.5 Flash Image (codename nano banana) 🍌 in Google AI Studio.

Gemini 2.5 Flash Image, which has been hyped for a long time, finally debuts | Image source: GeekPark

This is Google's most advanced image generation and editing model to date. It is remarkably fast, delivering results almost instantly. It has also achieved SOTA results on multiple leaderboards and leads LMArena by a wide margin.

Gemini 2.5 Flash Image achieves SOTA performance at launch | Image source: LMArena

In its technical blog, Google noted that Gemini 2.0 Flash had already won over developers with its low latency and cost-effectiveness, but users kept asking for higher-quality images and more powerful creative control. Gemini 2.5 Flash Image answers with several major upgrades. Character consistency is finally preserved, prompt-based image editing is more precise, and multiple images blend together naturally. It also draws on an understanding of real-world knowledge. Taken together, this makes it less a standalone model than the "origin point" on which the next generation of blockbuster applications could be built.

GeekPark tried it out at the first opportunity. Unexpectedly, this turned out to be more than a model update. It was the first time we could truly feel that the future of AI photo editing had arrived.

Gemini 2.5 Flash Image is now available to try in Google AI Studio | Image source: GeekPark

At first, I only planned a routine test to "see how much faster the new model is." To my surprise, just a few hours with it gave me a glimpse of what the next generation of blockbuster apps might look like.

In the past, we were used to tools like MeituPic. You tapped a button, applied a filter, and your photo looked better in no time. Gemini 2.5 Flash Image feels completely different. It is remarkably fast and as perceptive as a designer who can read your mind. You simply describe the effect you want, and it delivers the image within seconds.

Besides output quality, speed is the other obvious difference between Gemini 2.5 Flash Image and earlier image generation models | Image source: GeekPark

01 Ultra-fast Generation, Results in Seconds

The first thing you notice about nano banana is its speed. With some open-source models, even on a well-equipped computer, it could take dozens of seconds or longer to go from entering a prompt to getting a decent image. For mobile users, the wait was even more painful.

Gemini 2.5 Flash Image cuts that wait to just a few seconds. Google calls it its "latest, fastest, and most efficient" natively multimodal model, and a lot of optimization work has clearly gone into it. In my tests, results came back about three to four seconds after I entered a prompt, with clear resolution and detail.

The experience closely resembles editing in MeituPic. You tap "beautify," and the effect is almost instant. The difference is that MeituPic applies filters algorithmically, while Gemini 2.5 Flash Image creates an image from scratch or makes substantial changes to a photo according to your instructions. That instant, point-and-shoot satisfaction is something the tedious traditional editing process could never offer.

For needs like "removing passersby from the background," a single prompt is all it takes | Image source: GeekPark

If speed solves the main pain point for traditional photo editing users, then "native multimodality" pushes out the boundaries of what AI image tools can do.

Gemini 2.5 Flash Image can not only generate images but also understand text and image inputs together. I can give it a photo and a text prompt at the same time, and it combines information from both to work out exactly what I want.

For example, I uploaded a street photo and told it, "Change the background to a night view of Shinjuku, Tokyo." It recognized the subject of my photo, cleanly cut out the person, and replaced the background with the neon-lit streets of Shinjuku. Even more impressive, it kept the lighting on the person consistent with the new scene, avoiding the pasted-on look that manual cutouts often produce.

This level of understanding reminds me of a feature that phone makers have promoted in their built-in gallery apps in recent years: "one-click background replacement." The difference is that earlier background replacements often had blurry edges and mismatched lighting, which made the results look fake. Gemini 2.5 Flash Image draws on world knowledge and visual understanding to fill in those details. Its results look far more natural, and it preserves image detail much better than traditional text-to-image or image-to-image tools.

Original image vs. Gemini 2.5 Flash Image result | Image source: GeekPark

This is also why I think it will redefine the photo editing experience. Instead of relying on lots of manual adjustments, it uses the model's natural semantic understanding to get the job done in one pass. This matters most in scenarios like portrait editing, where the demands on image detail are extremely high.

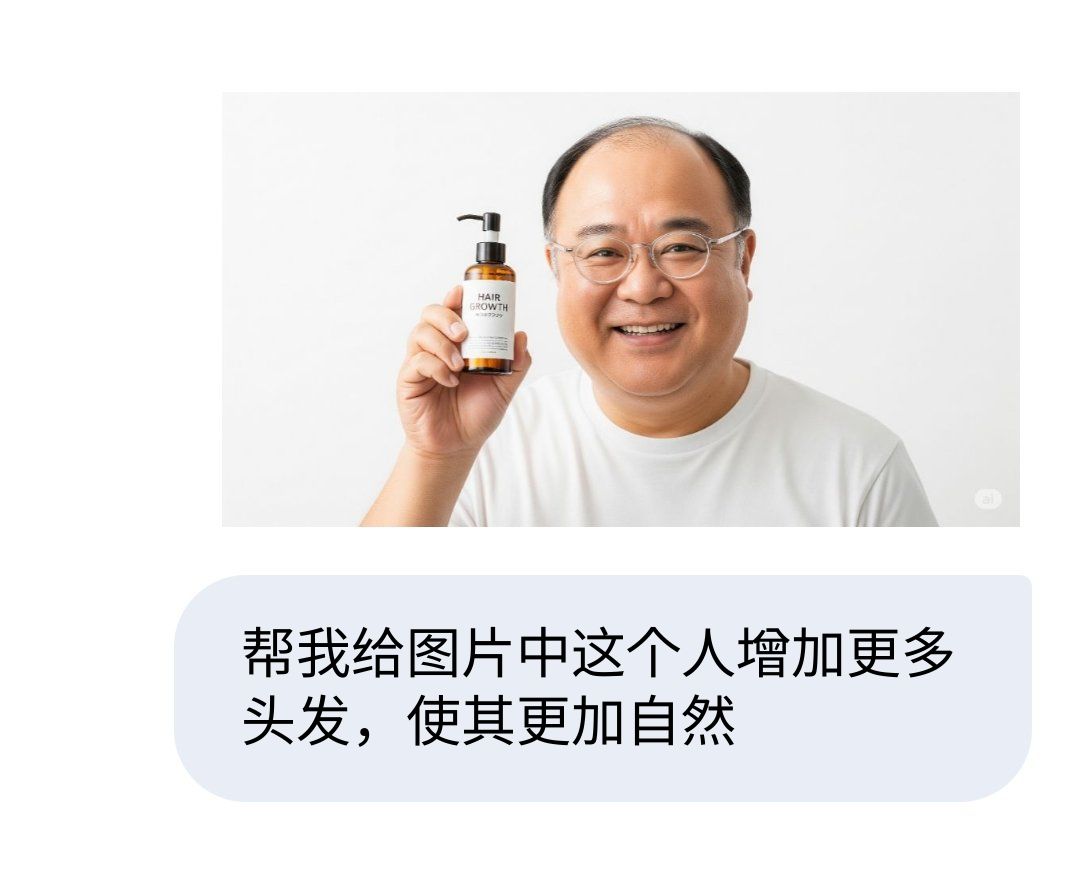

For portrait editing needs like these, Gemini 2.5 Flash Image's character consistency delivers an unprecedented "Vibe Photoshopping" experience.

Helping programmers "save face" in one second | Image source: GeekPark

This experience upends a common impression of AI image generation as something of a dark art. With a good prompt, the output is stunning. With a mediocre one, the result can be completely off.

With Gemini 2.5 Flash Image, I found that this element of luck is greatly reduced. It interprets prompts more precisely and more in line with user intuition, which is why many people suddenly find it far more useful.

For example, when I told it to "blur the background and highlight the person in the foreground," the image it produced a few seconds later was exactly what I wanted. When I asked it to "give the person in the photo a smiling expression," it curved the mouth up slightly and also adjusted the eyes, showing real attention to detail. I even tried "colorize this black-and-white photo." Instead of painting colors at random, it tried as far as possible to match the color palette a historical photo should have.

This "say it, get it" ability reminds me of using MeituPic in the past. I only wanted to smooth my skin, but ended up with the fake-looking face of a "level 10 beauty filter." Gemini 2.5 Flash Image's edits, by contrast, are precise and restrained. It genuinely understands what you want and tries to deliver it faithfully.

02 Enhanced Capabilities, Hard to Go Back

For a more direct comparison, I deliberately pitted it against the mobile photo editing tools I use every day.

In Snapseed, blurring the background usually takes me a minute or two of manually selecting the foreground and then adjusting the blur strength. Even for a skilled user, some back-and-forth is unavoidable.

MeituPic has a one-click background blur feature, but it often blurs the edges of the person too, making the result look unnatural.

With Gemini 2.5 Flash Image, a single sentence is enough. It automatically identifies the boundary between the person and the background, and the blur looks natural, with no further retouching needed.

When changing details in an image, it also avoids the "random scribbling" that earlier AI tools often introduced into other parts of the background | Image source: Twitter

This comparison actually illustrates one point: Gemini 2.5 Flash Image frees users from complex operations and hands more work over to the model. For ordinary people, it lowers the threshold for photo editing; for professionals, it saves a lot of time.

After experiencing it, my biggest feeling is that Gemini 2.5 Flash Image is no longer just a photo editing tool, but more like an "intelligent assistant."

In the past, using MeituPic meant working through a collection of preset functions such as filters, beautify, and mosaic, with each button mapped to one function. You selected and adjusted step by step until you were satisfied.

Gemini 2.5 Flash Image works on a completely different logic. You no longer have to learn how the tool works, because it understands your needs directly. You say it, and it does it for you.

This change seems subtle, but it fundamentally changes the relationship in the photo editing process. Before, we adapted to the tool; now, the tool adapts to us. This interaction is itself the prototype of the next generation of applications.

For now, Gemini 2.5 Flash Image is still at an early stage, and its capabilities still have limits. But its speed, understanding, and fidelity are already enough to spark the imagination.

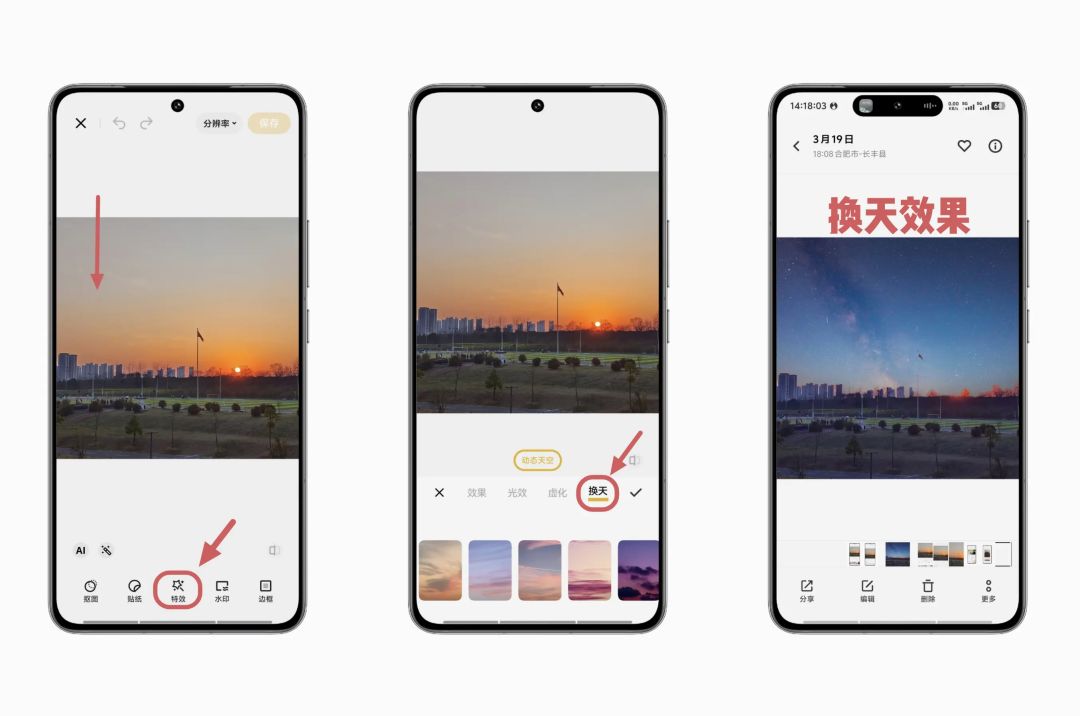

What if it were built into MeituPic? You might open the app, tell your phone, "Retouch this photo and make the skin look more natural," and get the result in a few seconds. While traveling, you might say, "Change the weather to sunny," and the photo would instantly turn bright and sunny. You might even change the mood of an entire video clip with a single sentence.

This approach may soon become a mainstream image editing feature in mobile operating systems | Image source: Twitter

This is why I think it will quickly transform today's photo editing workflow and define the next generation of "MeituPic." It goes beyond editing, reshaping how we interact with image processing and making AI your partner in photo post-production.

For now, though, Gemini 2.5 Flash Image cannot yet serve as an out-of-the-box photo editing app for the mass market. Its main purpose is still image generation rather than fine-grained retouching of existing images. In addition, every image it creates or edits carries a SynthID digital watermark so that social platforms can identify AI-generated content.

03 The Tipping Point for Blockbusters

Looking back, MeituPic became a national app because it solved, in the simplest possible way, a problem everyone had: making photos look better.

Gemini 2.5 Flash Image takes this a step further, distilling complex AI capabilities into a "seconds-to-image" experience anyone can use.

The first time I told it, "Help me blur the background," and the image was processed naturally in just a few seconds, I knew in my heart that this was the origin point for blockbuster apps. It is not just a model, but the underlying capability for countless future products.

The AI one-click sky replacement function that went viral among mobile users in recent years | Image source: vivo Community

Maybe in a few years we'll have forgotten the codename nano banana, but we'll see more and more image tools that let you say what you want and get it instantly. And maybe, like MeituPic back then, they'll become a shared memory for a generation of users.

Only this time, AI will push imagination even further.